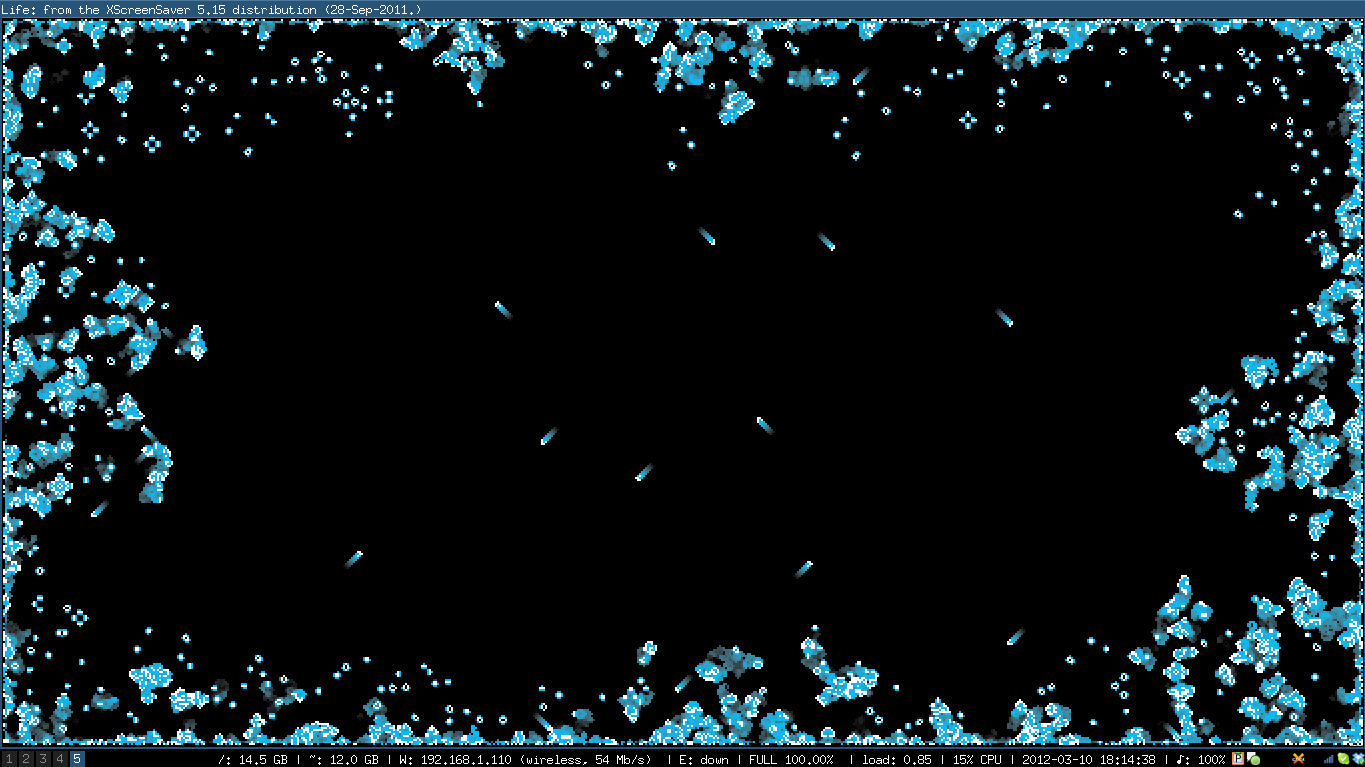

Recently, I promised you that I'd write my own screensaver. Well, here it is - an implementation of [cached]Conway's Game of Life using [cached]xscreensaver and OpenGL. It's based on the Python implementation I wrote, but quite a bit faster thanks to some trickery. Here's how it looks like:

Since I need to blit a lot of pixels for each frame (all changed cells + all those that died recently and are fading away), I decided to use an OpenGL texture for drawing. That way, my dense array gets painted directly to the screen. To avoid unecessary copying, I've interleaved the game state with the texture data.

The texture itself is a one-dimensional array of unsigned int (one byte for RGBA each), but I add additional pointers so I can use it like a normal 2D-Array. height-first (y-first) is important so OpenGL draws correctly.

1 2 3 4 5 6 7 8 9 10 11 | |

Now I don't really need an Alpha channel, and so I have space to store my game state information:

1 2 | |

What's with the 4 bits for alive and neighbor status each? To speed up calculation of the next generation, I don't check for alive neighbors for each cell, but rather save in the cell itself how many of its neighbors are alife. This cached value only needs to change when one of the neighbors dies / is born, which doesn't happen very often.

The alive status would only need 1 bit, but I use 4 for animation, so I can fade out newly deceased cells over a few frames. All 4 bits one is alive (0xf), everything else is dead and also an index into my color array. This status is also used when updating cells - we don't need to check if an already alive cell will start to live, and vice versa:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 | |

Maybe you've also noticed the comment about padding in the very first source snippet - this is purely so we don't need any modulo operations when setting alive/dead status in our neighbors.

As always, [cached]the source is on GitHub, check it out and play with it!

Tags: programming, graphics